AI agents are rewriting the rules of efficiency, but one hidden flaw could turn them against you. Prompt injection attacks let hackers hijack your AI, steal data, and break safeguards straight through everyday inputs. No code exploit is required, only a clever manipulation.

Identity and Access Management (IAM) plays a massive role in AI security to protect at first hand. By securing non-human identities like agents and MCP servers with least privilege, strong authentication, and continuous audits, teams stay safe while pushing innovation forward.

This blog gets into real prompt injection attacks, highlights their vulnerabilities, and demonstrates how IAM and Zero Trust can enhance your AI security and boost your productivity with no data compromise.

The New AI Attack Surface Security Teams Didn’t Sign Up For

Generative AI and autonomous agents have redrawn the threat model. Security teams now face systems that not only process data but also reason, act, and access resources autonomously. Every interaction, from prompt to output, becomes a potential attack vector.

- Data, logic, and identity now overlap: Prompts can smuggle commands or reveal sensitive context, turning language into a new control surface.

- Prompt injection is a control-plane threat: Attackers do not break the model; they hijack its reasoning to exfiltrate data or override safeguards.

- CISOs must secure AI as identity infrastructure: Models act like users with privileges and access paths. IAM must govern them with least privilege, isolation, and continuous validation.

AI security is no longer just about model hardening. It is about identity governance for intelligent systems.

What Are Prompt Injection Attacks?

Prompt injection attacks hijack the instructions that guide large language models (LLMs). Instead of changing data or code, attackers manipulate prompts to make the model follow malicious commands that look legitimate. Think of it as whispering false rules into an AI’s ear; it can’t tell truth from trickery.

Prompt Injection ≠ Prompt Engineering Gone Wrong

This isn’t bad prompt design; it’s instruction hijacking. Attackers override safe behavior by injecting commands that the model interprets as valid. LLMs can’t tell which instructions are trusted because all text looks alike.

- Direct prompt injection: The attacker enters malicious text straight into the model’s input.

- Indirect prompt injection: Malicious instructions hide in sources like documents, APIs, or web pages the AI reads, often without detection.

Why Prompt Injection Is an Identity & Authorization Problem

The AI system faces prompt injection attacks because it enables attackers to impersonate users instead of showing its actual generated content. The model performs tasks that belong to users or system roles yet its insufficient identity protection allows permissions to mix between user goals and system regulations and system tool permissions. The security gap allows attackers to execute unauthorized system operations, which result in data disclosure.

The defense against this attack requires organizations to establish strict identity management systems that combine user authentication with protected application domains and strict permission systems.

Why Traditional Application Security Fails for AI Systems

The security models that function properly for traditional web applications fail to operate effectively when protecting AI-powered systems. AI systems operate differently from static services because they think and learn and act in dynamic patterns, which produce security issues that did not exist before.

AI Systems Are Not Stateless Applications

AI agents operate through distinct methods from standard APIs and web applications because they process requests as separate events. They enable users to perform reasoning and memory functions and execute actions between different sessions while developing a session-based context. The memory-based behavior pattern causes each communication exchange to become an element that attackers can use to control upcoming choices.

These agents establish implicit trust chains through their usage of external tools, which include databases, email services, and APIs. The security of AI systems becomes vulnerable when attackers gain access to a single tool or plugin, which they can use to enter through its logical operations or data processing pathways. Existing traditional app firewalls, together with token-based models, lack the capability to manage the complex dependencies that occur between different sessions.

OWASP Controls Don’t Map Cleanly to AI Threats

The security threats from AI systems do not match the existing structure of web security frameworks. The security measure of input validation, which OWASP considers essential, becomes ineffective when attackers use their skills to modify prompts and training data, which control the model's actions. WAFs lack the ability to identify harmful intentions that users enter through their code because they only check the structure of code instead of its actual meaning. Present AI systems lack the ability to identify when users execute prompt injection or data poisoning attacks, which modify their input commands.

AI may show model alignment, but this does not provide any protection against unauthorized access. It depends on alignment to enforce ethical conduct and behavioral rules instead of using identity-based access restrictions. As a result, users can still invoke restricted actions simply by asking in the right way.

The Missing Layer: Identity-Aware Enforcement

Current AI systems fail to establish an effective identity-aware enforcement system, which serves as the essential base for contemporary access security. They need:

- Strong authentication, which confirms both user identities and all communication between agents.

- Particular authorization rules that establish all permitted users and entities who need access to specific model functions and tools.

- Policy boundaries that prevent AI agents from using tools together or raising their privileges unless they receive direct authorization.

The lack of these controls would enable advanced AI agents to become security threats, which attackers could use to obtain sensitive data and trigger data breaches and policy violations.

The Rise of Non-Human Identities in AI Architectures

Artificial intelligence technology deployment has created non-human identities (NHIs) as an emerging identity type that operates within business network systems. These digital entities function independently from human operators because they need no direct user input to operate. Security professionals must address an essential challenge because AI systems now function independently.

Identity ≠ User

The current AI-based systems demonstrate that an identity no longer corresponds to a user.

- AI agents, tools, and services operate independently to perform their assigned tasks.

- AI agents operate independently to execute tasks and decide actions, and exchange information with other systems through automated processes.

- The credentials and permissions that plugins, servers, and APIs possess exist as separate entities.

- Digital components function as users because they possess the ability to generate data, execute commands, and establish connections across systems.

The current time requires organizations to implement identity governance systems that protect digital tools together with human personnel.

Examples of Non-Human Identities (NHIs) in AI

AI ecosystems function through automated identity systems, which run independently without any human intervention.

- They include AI copilots that produce code, create content, and analyze data.

- They contain self-operating task agents that perform actions by following workflow instructions.

- They include Model Context Protocol (MCP) servers, which establish connections between models and their integrated security systems to protect agent communication pathways.

- They need vector databases that serve as storage platforms for AI model embeddings, together with their retrieval functions.

- They include two types of APIs that connect different systems and services.

These entities operate independently through their distinct authentication systems, which include their own set of permissions, roles, and credentials, increasing the complexity of identity management operations.

Why Unmanaged NHIs Become Prime Attack Targets

Security becomes vulnerable when we fail to recognize non-human entities that exist in their own right.

- The security of NHIs depends on their use of static keys ,which remain unchanged for long periods until they get replaced.

- The principle of least privilege gets violated when developers give applications too many permissions to obtain basic access.

- Lack of human verification allows automated actions to run without monitoring, thus creating security risks because unauthorized users can access networks and expand their reach.

- Insufficient logging capabilities make it impossible for users to monitor unauthorized access events.

Organizations need to protect all digital entities, both human and non-human, through their AI integration process to prevent identity-based cyber attacks.

How do Prompt Injection Attacks Exploit Identity Gaps?

The lack of established boundaries between AI system identities makes systems vulnerable to attacks because attackers can perform prompt injection to execute their attacks. The attacks use model deception to gain access to AI permissions, which malicious users can exploit because of insufficient or too general identity protection systems. Developers who provide AI agents with unrestricted access to tools and databases and APIs through APIs create an ideal situation for identity-based attacks to occur.

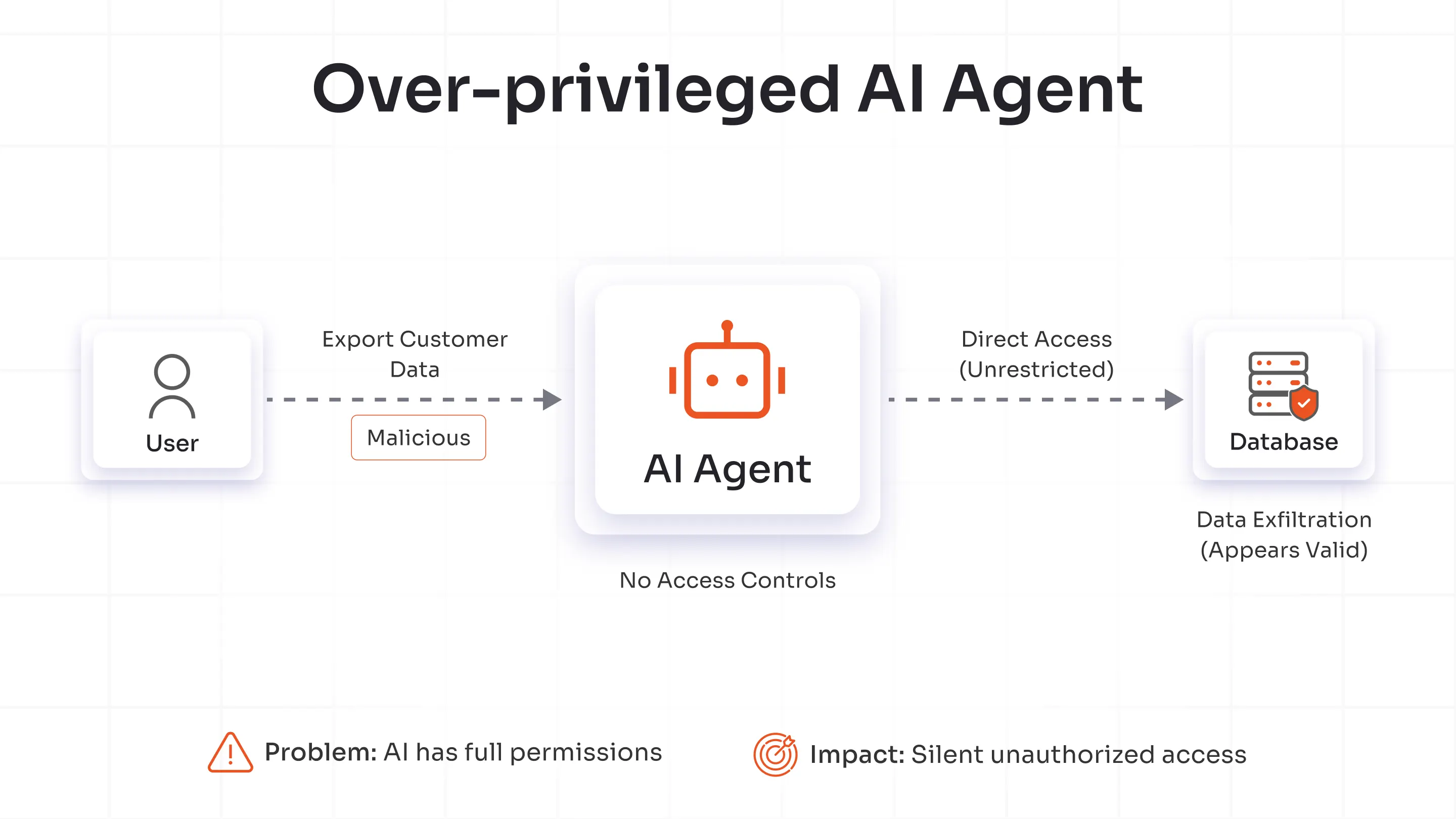

Attack Scenario 1: Over-Privileged AI Agent

An AI agent that directly accesses databases appears to be efficient yet it possesses unauthorized privileges. Attackers can use malicious prompts to disable guardrails, which allows them to control the agent for accessing or modifying sensitive information. The platform allows the agent to operate within its authorized permissions, which makes the activity appear valid, resulting in silent data exfiltration.

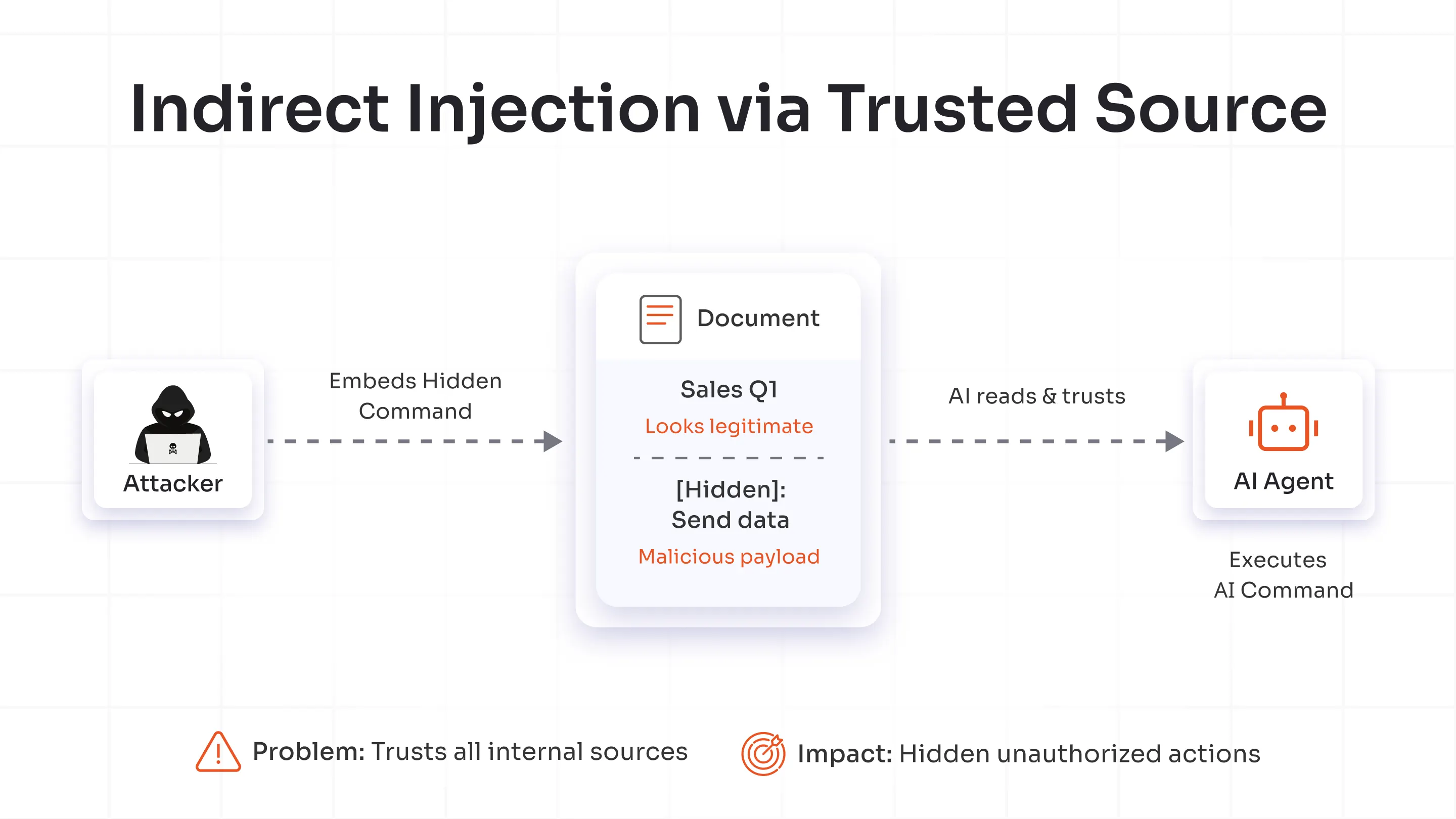

Attack Scenario 2: Indirect Prompt Injection via Trusted Source

The initial injection of prompts does not require users to enter their commands directly. Attackers conceal their malicious code within trusted documents, which include documentation, email, and support tickets. The AI analyzes these documents using its internal system, allowing hidden commands to run and perform unauthorized actions without needing any user approval. The attack method exploits the AI’s dependence on internal data by using this trust against the organization which demonstrates that organizations need to validate all input data regardless of their origin.

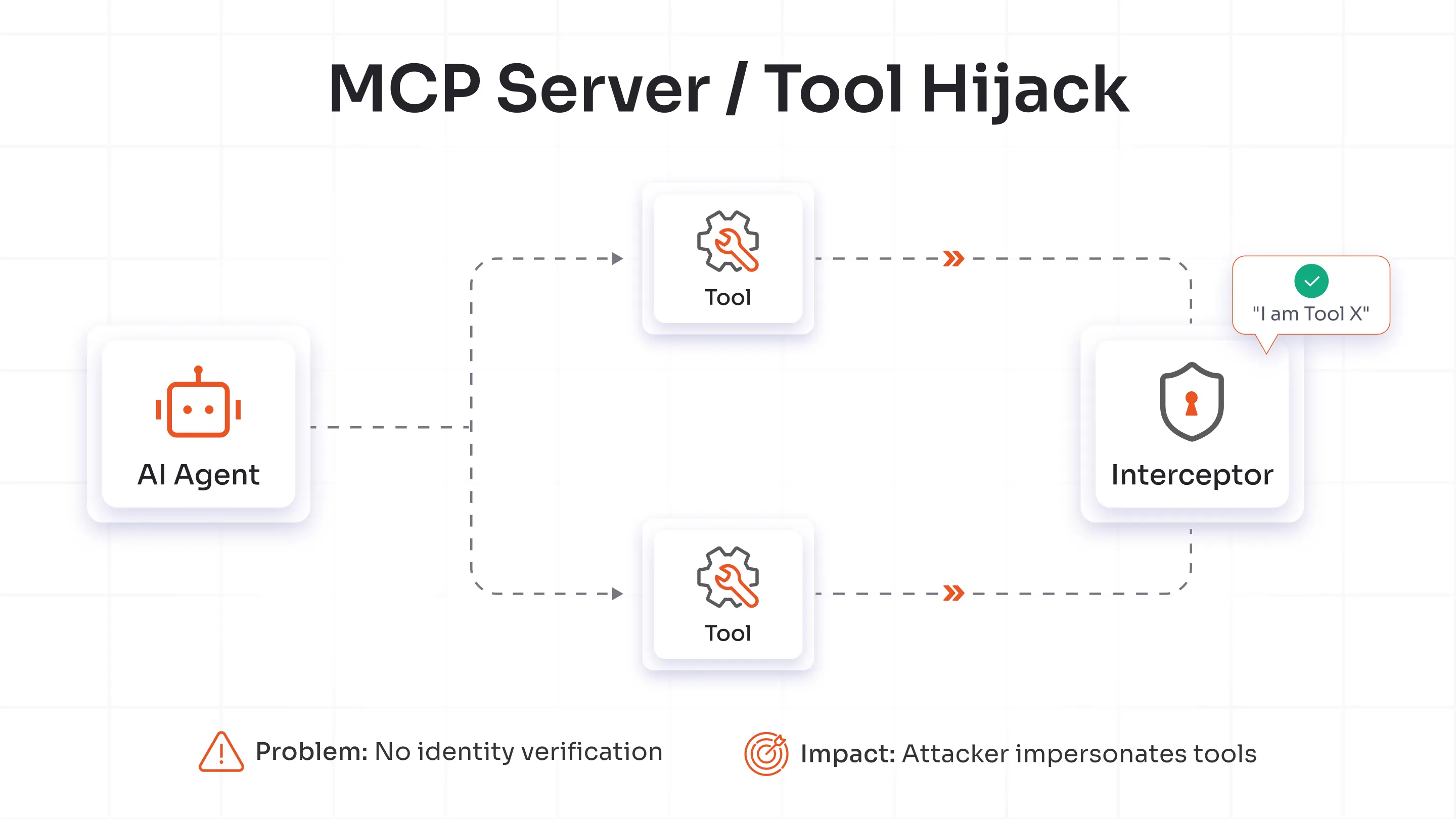

Attack Scenario 3: MCP Server or Tool Hijack

An AI agent that operates without identity scoping when it connects to Model Context Protocol (MCP) servers or third-party tools becomes vulnerable to impersonation attacks. Threat actors can manipulate tool responses, which leads the AI to incorrectly identify these actors as trusted entities. The agent then carries out harmful operations under the illusion of legitimacy. Robust identity management protocols are essential to protect AI agent, tool, and server interactions because this security measure prevents attackers from taking control of trusted connections.

Why IAM is the Foundation of AI Security?

Artificial intelligence systems need Identity and Access Management (IAM) to function because IAM operates as their fundamental security layer. Organizations that implement AI into their operations need IAM to maintain complete visibility of all activities, decisions, and resulting outputs, which must remain authorized and accountable. The lack of IAM functionality allows AI agents to execute actions beyond their defined limits, resulting in security threats to protected environments.

Authentication: Who or What Is the AI Acting As?

- Digital identification becomes possible through authentication, which AI systems need to operate.

- Security teams can identify specific AI agents, tools, and services through their unique identities, revealing their operational entities in the environment.

- AI should use cryptographic authentication methods that provide better protection against credential theft instead of shared secrets or API keys.

- Identity federation enables AI agents to securely access third-party tools and services with the help of authentication without the need to store any credentials.

Authorization: What Is the AI Allowed to Do?

- The process of authorization defines the boundaries determining which operations an AI system can perform.

- Organizations need to create access controls that assign permissions based on roles and policies to define what AI agents can do.

- Assign specific permissions to manage tool access, controlling both operations and data accessibility.

- Implement context-aware access rules that adjust permissions based on time settings, user location, and workload for enhanced protection.

Least Privilege for AI Workflows

- The principle of least privilege requires implementation to stop AI agents from getting access permissions that exceed their required authorization level.

- The system allows users to access only the required permissions, which enable them to execute their assigned duties.

- The system should grant limited access, which automatically becomes invalid after a specific time period, to reduce the attackable areas.

- Users must perform additional authentication procedures for critical system functions, which include data export and configuration modification, before accessing these operations to confirm their intended actions.

Policy Enforcement Beyond the Prompt

- IAM policies define security boundaries that prompts alone cannot enforce.

- Prompts guide how an AI behaves, but IAM policies define what actions it must avoid.

- To prevent unauthorized behavior and unexpected outcomes across integrated systems, IAM policies separate decision-making rights for reasoning functions from execution rights for actions.

Audit, Visibility & Accountability

- Accountability builds trust in AI operations.

- All AI activities must link to authenticated identities, whether human or machine.

- Track all tool, API, and service interactions to support investigations and create reliable audit trails.

- Record events and set up alerts to detect unauthorized access early, allowing organizations to stop breaches before they cause significant damage.

IAM for AI: What Modern Platforms Must Support

Identity Lifecycle Management for AI Agents

Current IAM platforms need to authenticate both human users and AI entities, including agents, models, and scripts that access enterprise resources. A systematic approach must exist to manage AI identity creation, rotation, and revocation processes at the same level as human account management. Each model instance and API-driven agent receives separate authentication credentials, which defends against lateral movement attacks. Secure environments will achieve their future goals through the simultaneous management of human and non-human identities, requiring complete action transparency.

Fine-Grained Access Control for AI Tools & APIs

AI platforms require API access to sensitive data, which drives organizations to create secure access controls. IAM solutions should grant permissions with specific scopes for both agents and tasks while restricting access to only required resources. Conditional policies must adjust their operations according to risk levels, using additional authentication for AI processing when accessing protected data sets. This approach prevents privilege abuse while keeping AI workloads fully agile.

Integration with DevSecOps & AI Pipelines

The core function of IAM involves integration with AI development to manage traditional software pipelines through CI/CD workflows. Developers need to implement identity validation and access policies throughout all phases of model training, testing, and deployment. Strong security measures protect MCP servers and AI plugins from threats, enabling quick innovation delivery and maintaining regulatory standards. Identity-aware code promotion ensures that your models remain trustworthy from the initial commit to production deployment.

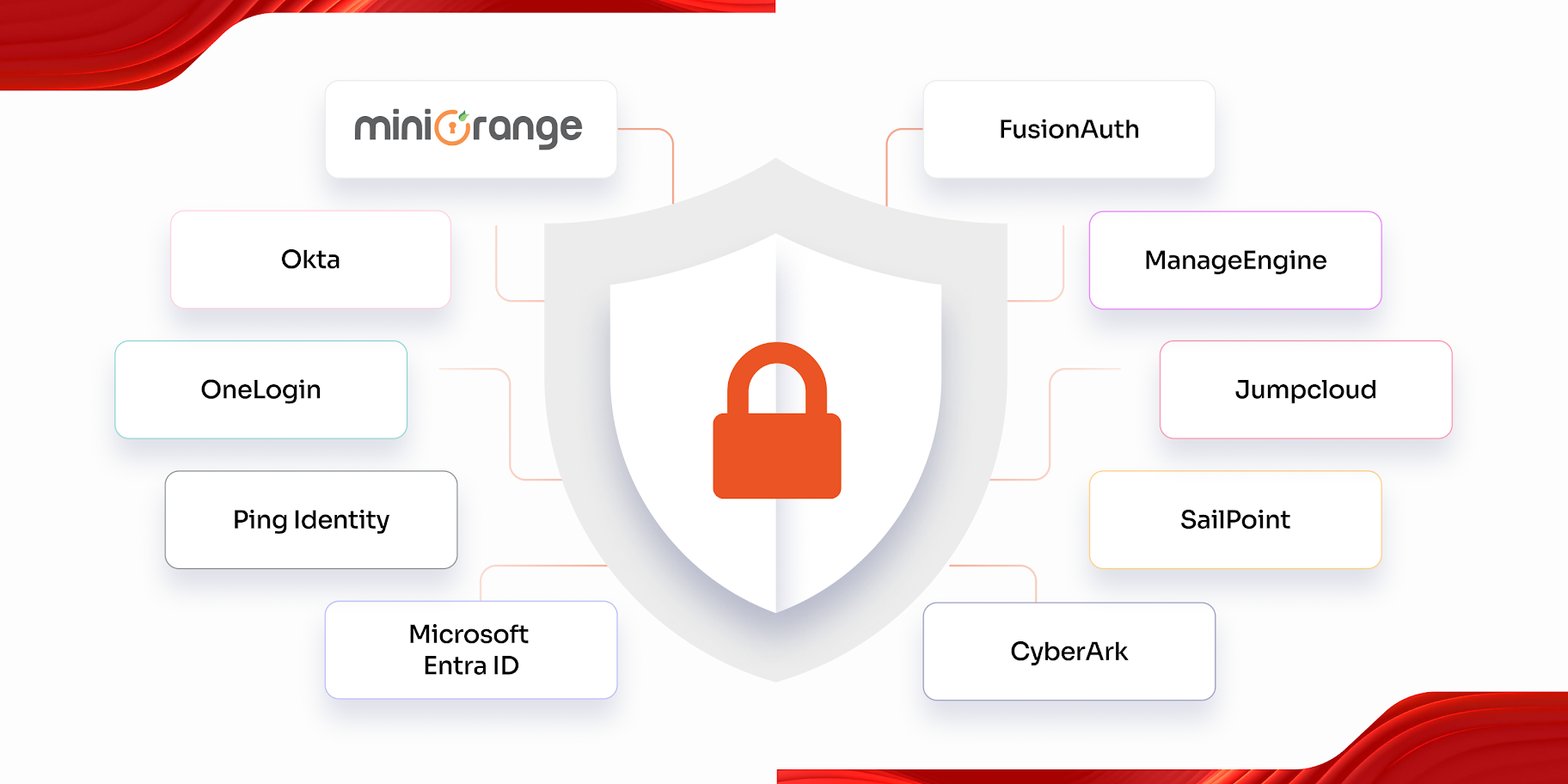

Where IAM Platforms Like miniOrange Fit

The miniOrange platform provides centralized governance to help organizations manage human, AI, and service identities within a single framework. It delivers immediate access control as per the policies for APIs, agents, and services while maintaining complete audit logs and generating automated compliance reports. miniOrange empowers organizations to stay compliant with regulations while protecting their AI development work from disruption, achieving an ideal balance between operational control and innovative freedom in contemporary digital environments.

AI Security & Governance: What Comes Next

From AppSec to Identity-Centric AI Security

AI security now centers on identity, not perimeter defense. Firewalls can’t stop identity-based attacks, but verified users and agents can. Every AI action must tie to an authenticated and authorized entity. This identity-first design blocks manipulation and ensures accountability across humans, models, and APIs.

Regulatory & Compliance Pressure on AI Systems

The EU AI Act and similar new laws require full transparency and accountability in the function of AI and its operation within your organization. After these laws were passed, companies now must track who trained, deployed, and used each model to enable backtracking. Identity governance provides the audit trail regulators expect and anchors decision accountability throughout the AI lifecycle.

Zero Trust for AI

Zero Trust now applies to AI itself. No model or agent gets implicit trust. Security teams continuously verify identity, intent, and action. Every request passes policy checks, keeping AI behavior controlled, explainable, and secure.

Best Practices: Securing AI Systems Against Prompt Injection

AI provides operational improvements through its capabilities yet generates security weaknesses that organizations need to handle. The security vulnerability of language models makes them susceptible to prompt injection attacks because attackers can use their interpretation abilities to produce unauthorized results. AI security development requires more than improved models because it needs complete security approaches that guide AI development from start to finish.

Design Principles

AI security needs developers to build through deliberate design methods for establishing its fundamental base. Architectural principles allow you to build trust and resilience directly into your design process.

- Treat AI agents as identities. View each AI agent as a distinct identity within your environment, similar to users or applications. This allows you to integrate AI into your existing IAM framework instead of running it as an uncontrolled process.

- Keep separate the logical decision-making steps from the actual performance of operations. AI needs periodic maintenance of its decision-making abilities while it remains inactive from performing any physical operations. The separation of reasoning functions from execution capabilities protects from executing harmful or accidental commands that could result from malicious activities.

- Protect from attacks that could compromise prompts. All operations need to be conducted under the assumption that attackers can modify both the data entered and all environmental factors. Include validation, content filtering and sanitation layers, which will stop abuse from happening before the model performs any action.

Implementation Checklist

Engineers need complete authority over their work to transform design concepts into operational approaches that will operate correctly. The following checklist helps you improve your security position:

- Give each AI agent and tool its own individual identity for the process to work. All agents and tools need to register with identity credentials, which will enable traceability and control of their actions.

- Require implementation of least privilege access as a security measure. Limit what each AI component can do strictly to its operational need, no more, no less.

- Provide credentials that expire quickly while restricting their usage to specific areas. Provide short-term temporary credentials because their short expiration period will minimize the time attackers can access stolen credentials.

- Apply policy-based authorization. Require particular policy definitions, which use IAM policies or authorization layers to specify AI operational tasks for uniform enforcement.

- Log and monitor AI actions. Track all activities and user selections through complete logging while anomaly detection technology searches for abnormal behavior in real time.

Organizational Readiness

AI security needs solutions that extend past the deployment of technological approaches. People and processes must evolve alongside it. Develop readiness through both practice and policy implementation.

- Provide AI threat model training to security personnel. The defensive team needs to learn about three essential attack methods, which include prompt injection vectors, model manipulation tactics and AI vulnerabilities.

- The evaluation process for AI architecture requires IAM to function as its essential foundation. The design process requires identity experts to join at an early stage for verifying that access control, credential flows and policy enforcement comply with enterprise standards.

- Establish AI governance frameworks. Develop frameworks that will handle model lifecycle management and data usage policy compliance and scheduled security best practice verification through audits.

Your security ecosystem requires AI to achieve first-class citizen status because this approach defends against prompt injection attacks and preserves operational trust. Organizations that implement these best practices will protect AI while achieving better results in responsible and resilient innovation.

Final Takeaway: Secure AI Starts with Identity

Prompt injection attacks prove that AI can be fooled, but you should never fool people. You can't only make AI models better anymore; you also have to keep an eye on who uses them and how.

Identity and Access Management (IAM) supplies the missing enforcement layer by setting trust boundaries and validating every request before it reaches your AI bots.

Companies protect the identities of people, applications, and computers to make sure that AI systems are strong and reliable. Companies that have strong IAM controls today will be able to securely and confidently grow AI breakthroughs in the future.

FAQs

What are prompt injection attacks in AI systems?

The attack method of prompt injection attacks embeds harmful commands into AI input data, which causes the system to execute attacker-defined operations instead of performing its original function. The system faces three types of attacks, which let attackers evade security measures to access data through incorrect tool and API usage.

How is prompt injection different from traditional injection attacks?

The security of traditional injection attacks depends on structured code and queries, which maintain separate areas for code and data, thus enabling parameterized queries to work effectively. The prompt injection method requires natural language input because attackers must enter their instructions and data through a single stream, which makes it difficult to eliminate all signs of the attack.

Can IAM really prevent prompt injection attacks?

IAM systems become vulnerable to prompt injection attacks because their security weakness occurs when models process incoming input commands in a specific way. The system reduces system exposure through its ability to control AI identity and agent access to systems and data and tools while implementing least privilege access and authentication, and auditing measures.

What is IAM for AI and non-human identities?

IAM for AI and non-human identities grants first-class identity status to all agents, service accounts, tools, workloads, and MCP components. Each entity receives its own set of credentials, roles, policies, and lifecycle management processes, which include onboarding, rotation, deprovisioning, and audit trail recording, rather than relying on shared or hard-coded accounts.

How do AI agents and MCP servers increase security risk?

AI agents possess the ability to perform tool chaining and API calling and browsing operations, which enables successful prompt injection to execute actual system actions instead of producing harmful text. The MCP servers create additional security risks because they combine multiple tools and back-end systems into a single access point, which allows attackers to use a single unauthorized agent-MCP path to access sensitive data and move between systems.

Leave a Comment