AI agents are already inside most enterprise environments. They complete tasks, connect to live systems, and make decisions that used to require a human. Gartner projects that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. What was an experiment two years ago is now a core part of how work gets done.

If your organization is adopting AI agents or planning to, security is not something you can figure out later. In this blog, we cover the six biggest AI security risks enterprises will face in 2026 and the steps you can take to address them before they turn into security incidents.

Why AI Agents are Gaining Popularity

The appeal of AI agents is easy to understand. Most enterprises do not have a shortage of software. They lack smooth execution.

Teams lose time every day to:

- Moving information between tools

- Checking multiple systems before taking action

- Routing requests to the right owner

- Following up on repetitive work that should already be automated

AI agents promise to reduce that friction. Instead of waiting for a person to coordinate every step, an agent can retrieve context, call a tool, update a system, and hand off the task with less delay.

That is why adoption is accelerating across functions:

- Support teams use agents to triage and respond faster

- Internal ops teams use them to move requests across workflows

- Technical teams use them to enrich tickets, search logs, and assist investigations

- Business teams use them to summarize information and trigger next steps

Agents are becoming part of how work gets done, not just part of how people search for answers.

How AI Agents Create a New Security Surface

A lot of people treat AI agents like smarter chatbots. They are not. A chatbot sits inside a conversation and waits for your next message. An agent lives inside your workflow and keeps moving on its own.

AI agents expand the attack surface because they combine three things in one system:

- Language understanding

- Tool access

- Autonomy

On their own, each of those is manageable. Together, they create new failure modes.

For example:

- A model can misunderstand a prompt

- A tool can accept unsafe parameters

- An autonomous workflow can keep going without a human stopping it

None of these failures requires a sophisticated attack. A poorly written prompt or a misconfigured tool is enough to set things in motion. That is what makes this security surface different from anything enterprises have dealt with before.

The 6 Biggest AI Agent Security Risks in 2026 (and How to Control Them)

These six risks keep showing up because they follow the way enterprises actually build and connect agents. They start as small design choices and then become meaningful exposure once the agent is live.

1. Over-Privileged AI Agents

Many organizations start by giving agents broad access. Why not? If a customer support agent can read tickets, edit cases, and even export data, it feels powerful and fast. The trouble is that permission sets created for convenience often stay in place long after the agent moves into production.

In practice, this can mean:

- An agent that technically only needs to retrieve case history can also change customer records.

- A finance agent that can summarize reports may also be able to download raw datasets.

This is one of the most common AI agent vulnerabilities. The problem is not that the agent behaves badly on its own. The problem is that it already has too much power. If a prompt is manipulated, a rule is misconfigured, or a memory note is misread, the agent can cause damage without “escalating” anything. Its permissions are already high enough.

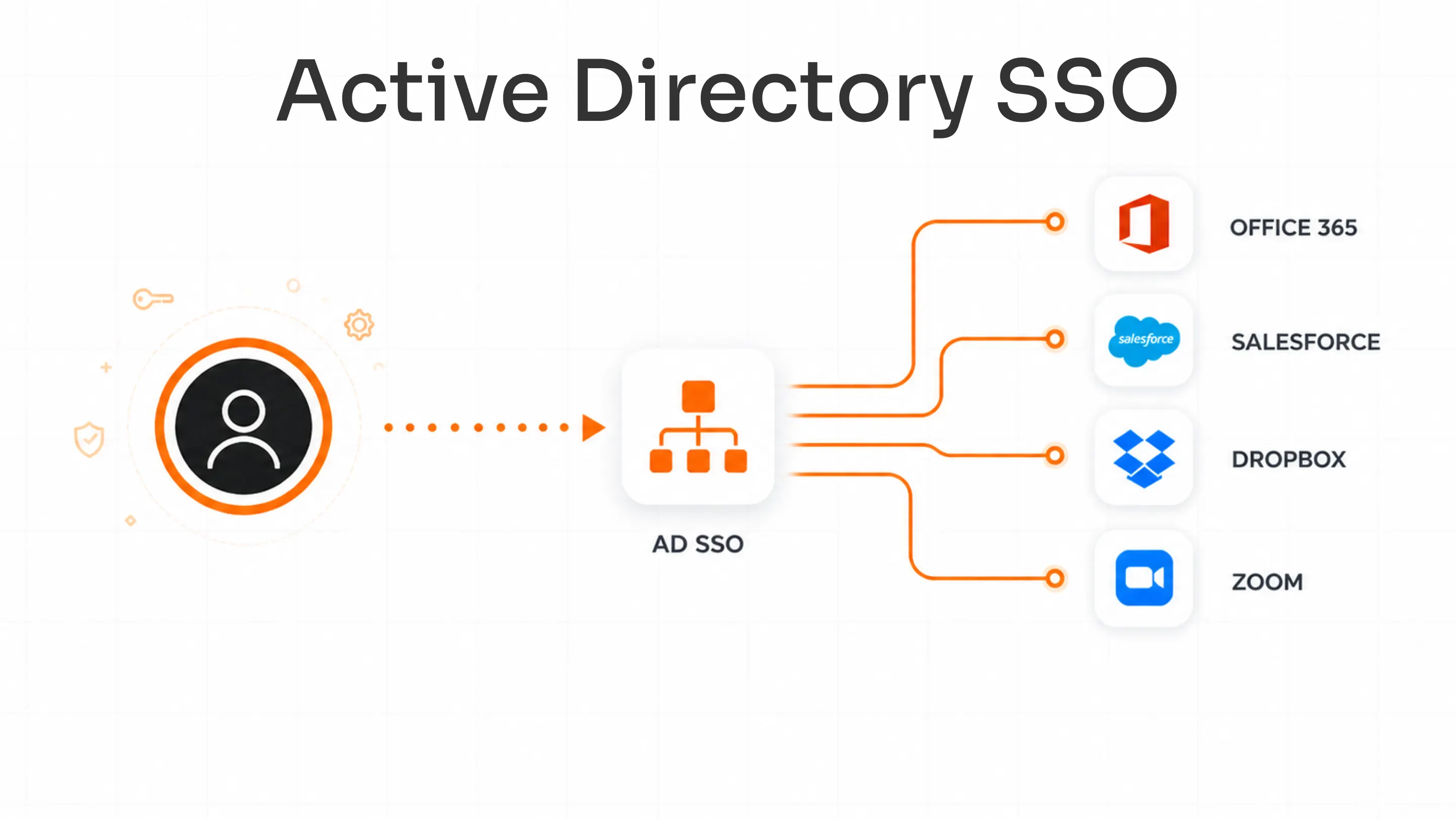

Solution: Least-privilege IAM is the most direct control here. It means every agent gets the minimum permissions needed for its specific task and nothing more. Strong AI agent authentication also plays a critical role by ensuring agents can securely verify their identity before accessing systems, APIs, or sensitive data.

This involves:

- Limiting agents to only the systems and actions they truly require

- Separating read, write, export, and administrative privileges

- Reviewing and reducing permissions as workflows evolve

- Preventing agents from inheriting unnecessary access over time

For example, a support agent can read and update tickets without being allowed to export customer data. Or a sales agent can summarize pipeline information without being able to change ownership or pricing. That shift in access design greatly reduces the blast radius if something ever goes wrong while keeping the agent productive.
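To make the support-agent example concrete, here is a minimal sketch of a deny-by-default permission check. The names `Action`, `AgentPolicy`, `support_policy`, and `authorize` are hypothetical and exist only for illustration; in a real deployment these grants would live in your IAM system rather than in application code.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    READ = "read"
    WRITE = "write"
    EXPORT = "export"
    ADMIN = "admin"


@dataclass(frozen=True)
class AgentPolicy:
    """Explicit (resource, action) grants for one agent. Anything else is denied."""
    agent_id: str
    grants: frozenset  # of (resource name, Action) tuples

    def allows(self, resource: str, action: Action) -> bool:
        return (resource, action) in self.grants


# Hypothetical policy: the support agent may read and update tickets, nothing more.
support_policy = AgentPolicy(
    agent_id="support-agent",
    grants=frozenset({("tickets", Action.READ), ("tickets", Action.WRITE)}),
)


def authorize(policy: AgentPolicy, resource: str, action: Action) -> None:
    """Raise PermissionError unless the policy explicitly grants the action."""
    if not policy.allows(resource, action):
        raise PermissionError(
            f"{policy.agent_id} is not allowed to {action.value} {resource}"
        )
```

Calling `authorize(support_policy, "tickets", Action.WRITE)` returns quietly, while `authorize(support_policy, "customers", Action.EXPORT)` raises `PermissionError`. Because read, write, export, and admin are separate actions, widening one of them never silently widens the others.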

2. Static or Hardcoded Credentials

In early deployments, it is common to see agents using long-lived API keys, shared service accounts, or secrets embedded directly in configuration files. These patterns are easy to implement but hard to manage at scale.

The problem shows up when:

- Credentials are rarely rotated

- Multiple agents reuse the same token

- No one is clearly responsible for the credential lifecycle

- Exposed secrets remain active for too long

This weakens accountability. When something suspicious happens, it is difficult to tell which agent, workflow, or integration triggered the behavior. The agent may look like a small, isolated component, but its identity is still shared through reused credentials and long-lived tokens.

Solution: Secrets management helps replace those scattered, static secrets with a more controlled, centralized approach.

In practice, this covers:

- Storing credentials in dedicated vaults instead of configuration files

- Rotating secrets automatically on a defined schedule

- Using short-lived tokens instead of permanent API keys

- Assigning unique identities and credentials to each agent

Together, these practices make it much harder for an exposed credential to become a long-term access path. They also improve auditability because each agent can have its own distinct, managed identity instead of hiding behind a shared token.
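The idea of unique, short-lived credentials per agent can be sketched with a toy in-memory issuer. This is an illustration under stated assumptions, not a replacement for a real vault: `AgentToken`, `TokenIssuer`, and the 15-minute default lifetime are all hypothetical choices.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentToken:
    agent_id: str
    value: str
    expires_at: float  # Unix timestamp


class TokenIssuer:
    """Toy in-memory issuer; a real deployment would delegate this to a vault."""

    def __init__(self, ttl_seconds: float = 900.0):
        self.ttl_seconds = ttl_seconds
        self._active = {}  # token value -> AgentToken

    def issue(self, agent_id: str, now: Optional[float] = None) -> AgentToken:
        """Mint a random token bound to exactly one agent identity."""
        now = time.time() if now is None else now
        token = AgentToken(agent_id, secrets.token_urlsafe(32), now + self.ttl_seconds)
        self._active[token.value] = token
        return token

    def verify(self, value: str, now: Optional[float] = None) -> Optional[str]:
        """Return the owning agent_id if the token is known and unexpired, else None."""
        now = time.time() if now is None else now
        token = self._active.get(value)
        if token is None or now >= token.expires_at:
            self._active.pop(value, None)  # forget expired tokens
            return None
        return token.agent_id
```

Because every token maps back to a single `agent_id`, a suspicious request can be attributed to one agent, and a leaked token stops working on its own once the lifetime runs out.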

3. Limited Visibility and Logging

Many teams only record the final outcome of an agent’s work: a ticket created, a report shared, or a workflow advanced. They often miss the full chain of steps that got them there.

An agent can:

- Receive a user prompt

- Read internal documents or tickets

- Call multiple tools

- Write to memory

- Trigger downstream actions

If those steps are not logged clearly, the agent environment turns into a black box. When something goes wrong, incident response teams can see that an agent took an unexpected action, but they cannot see what it read, which tool it called, or how it reached that decision.

Solution: Observability and logging open that black box. In a Zero Trust AI environment, you do not assume the agent is safe just because it is running. You verify every action through logs, and you treat every agent execution as something that must be traced.

That means capturing a full trace of every agent execution:

- The initial prompt and context

- The tools it called and the parameters it used

- The memory or state changes

- The final output and downstream actions

Once enterprise AI security is operational rather than aspirational, observability becomes the first line of defense against unexplained agent behavior and hard-to-diagnose incidents.
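One way to capture the prompt, tool calls, memory changes, and final output in a single record is a small trace object that serializes to JSON. The sketch below is an assumption about shape, not a standard: `AgentTrace`, its field names, and the step kinds are hypothetical, and the details passed to `record` must be JSON-serializable.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class AgentTrace:
    """One record per agent execution, from the initial prompt to the final output."""
    agent_id: str
    prompt: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    steps: list = field(default_factory=list)

    def record(self, kind: str, **details) -> None:
        """Append one step, such as a tool call, memory write, or output."""
        self.steps.append({"ts": time.time(), "kind": kind, **details})

    def to_json(self) -> str:
        """Serialize the whole trace so it can be shipped to a log pipeline."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical execution: the tool name and parameters are placeholders.
trace = AgentTrace(agent_id="support-agent", prompt="Summarize the open ticket")
trace.record("tool_call", tool="search_tickets", params={"status": "open"})
trace.record("memory_write", key="last_query", value="open tickets")
trace.record("output", text="One open ticket about a billing error.")
```

Emitting `trace.to_json()` at the end of every run gives incident responders the ordered chain of steps under a single `trace_id`, rather than only the final outcome.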

4. Prompt Injection Attack Exposure

A prompt injection attack is one of the most important LLM security risks because it targets the agent’s reasoning, not its infrastructure. It happens when an agent is manipulated into following instructions it should ignore.

That can occur through:

- A direct user input (a malicious prompt)

- A poisoned document loaded into the agent’s context

- A ticket, webpage, or email with hidden instructions

- Indirect content that an agent retrieves and treats as “trusted”

The danger is that an agent may not realize it has been tricked. It can follow the hostile instructions, ignore its own rules, misuse connected tools, or expose data, all while appearing to behave normally.

Solution: Input sanitization is the key control here. It means treating external content as untrusted until it has been checked, filtered, or classified. Instead of treating every piece of retrieved text as neutral, the system applies guardrails that:

- Inspect for manipulative patterns

- Isolate or strip out dangerous instructions

- Separate “context” from “control” before the agent can act

With effective input sanitization, a prompt injection attempt is far less likely to turn into a full-blown security incident.
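The guardrails above can be sketched as a small screening step that runs before retrieved text reaches the agent. The patterns below are deliberately simple and purely illustrative; real injection attempts vary far more, so treat this as one layer rather than a complete defense. `SUSPICIOUS_PATTERNS`, `screen`, and `build_context` are hypothetical names.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only; a production system would combine several detection methods.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.I),
    re.compile(r"disregard (your|the) (rules|system prompt)", re.I),
    re.compile(r"you are now\b", re.I),
]


@dataclass(frozen=True)
class ScreenedContent:
    source: str
    text: str
    flagged: bool


def screen(source: str, text: str) -> ScreenedContent:
    """Flag retrieved text that matches any known manipulative pattern."""
    flagged = any(p.search(text) for p in SUSPICIOUS_PATTERNS)
    return ScreenedContent(source=source, text=text, flagged=flagged)


def build_context(items: list) -> str:
    """Leave flagged items out and label the rest as data, not instructions."""
    blocks = [
        f"[untrusted data from {item.source}]\n{item.text}\n[end untrusted data]"
        for item in items
        if not item.flagged
    ]
    return "\n\n".join(blocks)
```

Flagged items are dropped here for simplicity; routing them to human review is an equally reasonable choice. The explicit data labels are what separate "context" from "control" for whatever consumes the result.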

5. Insecure Tool or Plugin Integrations

AI agents become powerful when they connect to tools. That same power becomes a risk when those integrations are weak. Every API, plugin, browser action, or external connector is another place where validation, permissions, and trust can break down.

Common issues include:

- Poor validation of what the agent sends to the tool

- Broad permissions that let the agent do more than it should

- Third-party tools or plugins added without review

- Complex chains of tools that security teams do not fully understand

A security assistant that can query logs, run scripts, and open tickets may seem useful until an unsafe script or misconfigured tool becomes a hidden execution path.

Solution: Vendor vetting helps by forcing a security and compatibility check before any new tool or plugin becomes part of a production workflow.

This means:

- Asking what permissions the integration requires

- Checking how it validates input parameters

- Reviewing how it handles failures and edge cases

- Limiting the agent’s ability to misuse it

When agentic AI security is treated this way, tools add value without quietly expanding the attack surface.
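Part of that review is checking how an integration validates what the agent sends it. A minimal sketch of a validating wrapper follows, assuming a hypothetical `query_logs` tool with two parameters; the schema format and every name here are made up for illustration.

```python
from typing import Any, Callable

# Hypothetical schema: parameter name -> (expected type, validity check).
QUERY_LOGS_SCHEMA = {
    "service": (str, lambda v: v in {"auth", "billing", "api"}),
    "limit": (int, lambda v: 1 <= v <= 500),
}


def validate_params(schema: dict, params: dict) -> None:
    """Reject unknown, missing, wrongly typed, or out-of-range parameters."""
    unknown = set(params) - set(schema)
    missing = set(schema) - set(params)
    if unknown or missing:
        raise ValueError(f"unknown={sorted(unknown)} missing={sorted(missing)}")
    for name, (expected_type, is_valid) in schema.items():
        value = params[name]
        # Exact type match, so a bool is not accepted where an int is expected.
        if type(value) is not expected_type or not is_valid(value):
            raise ValueError(f"invalid value for {name!r}: {value!r}")


def guarded(tool: Callable[..., Any], schema: dict) -> Callable[..., Any]:
    """Wrap a tool so every call is validated before it executes."""
    def wrapper(**params):
        validate_params(schema, params)
        return tool(**params)
    return wrapper


def query_logs(service: str, limit: int) -> str:
    # Stand-in for a real log query; it only reports what it would run.
    return f"querying {limit} lines from {service}"


safe_query_logs = guarded(query_logs, QUERY_LOGS_SCHEMA)
```

The agent is handed `safe_query_logs` and never the raw tool, so a call such as `safe_query_logs(service="auth; rm -rf /", limit=10)` fails with `ValueError` before anything executes.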

6. Data Exfiltration via Agent Outputs

Some of the most dangerous leaks do not look like breaches. An agent may return a response that reads smoothly but includes sensitive context, internal notes, or confidential data the user was never meant to receive.

This usually happens when:

- Retrieval pulls in more information than needed

- No output layer checks what is being exposed

- Memory reuses private context in later responses

- The user-facing response is not filtered for sensitivity

The result is that an agent can leak information without triggering obvious alarms. It may feel like good service, but it can still expose contracts, compensation details, or operational plans.

Solution: Output filtering is the final line of defense. It means the agent’s response is checked for restricted fields, confidential data, and excessive context before it leaves the system.

This can include:

- Masking or redacting specific identifiers

- Removing or truncating sensitive segments

- Enforcing strict rules on what can be revealed in the answer

It is especially important in environments where the agent is connected to HR, finance, compliance, or customer data. The model may not know what level of detail is acceptable. The output filter does. That extra layer of control can turn a risky response into a safe, compliant one.
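As a rough illustration of masking identifiers and enforcing rules on what can be revealed, here is a small output filter. The regular expressions and the `RESTRICTED_FIELDS` set are assumptions chosen for the example; a production filter would more likely rely on vetted data classification or DLP tooling than on two patterns.

```python
import re

# Illustrative patterns: an email address and a US-style 3-2-4 digit identifier.
REDACTIONS = [
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[REDACTED_EMAIL]"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[REDACTED_ID]"),
]

# Hypothetical field names the agent must never return to end users.
RESTRICTED_FIELDS = {"salary", "ssn", "internal_notes"}


def redact_text(text: str) -> str:
    """Mask identifiers that match any redaction pattern."""
    for pattern, replacement in REDACTIONS:
        text = pattern.sub(replacement, text)
    return text


def filter_record(record: dict) -> dict:
    """Drop restricted fields and redact the string values that remain."""
    return {
        key: redact_text(value) if isinstance(value, str) else value
        for key, value in record.items()
        if key not in RESTRICTED_FIELDS
    }
```

Running every response through `redact_text`, and every structured payload through `filter_record`, means the decision about what may leave the system is made by explicit rules rather than by the model.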

The 2026 Enterprise AI Adoption Gap: Speed vs. Security

Gravitee surveyed more than 900 executives and technical practitioners and found a telling contrast. 80.9% of technical teams have already pushed AI agents into active testing or production. Only 14.4% say those agents go live with full security or IT approval. So, where is the oversight for the rest?

Speed is winning right now. Business teams want agents to go live fast, and the pressure to move quickly is real. Security, by nature, asks teams to slow down, verify, and document. That tension is not new, but with AI agents, it carries more weight. These systems connect to live data, trigger actions across tools, and operate with permissions that affect real business processes.

This is where an effective AI governance framework and strong AI agent compliance practices become critical. Enterprises need clear policies around agent access, authentication, data handling, auditability, and human oversight before deployment begins. Without governance, AI adoption can quickly create security blind spots that spread across systems and workflows.

The organizations that are finding the right balance are not choosing between the two. They are making governance decisions before deployment rather than after. The risks and solutions covered in this blog give you a practical foundation for doing exactly that. Understanding where exposure sits is the first step toward managing it without losing momentum.

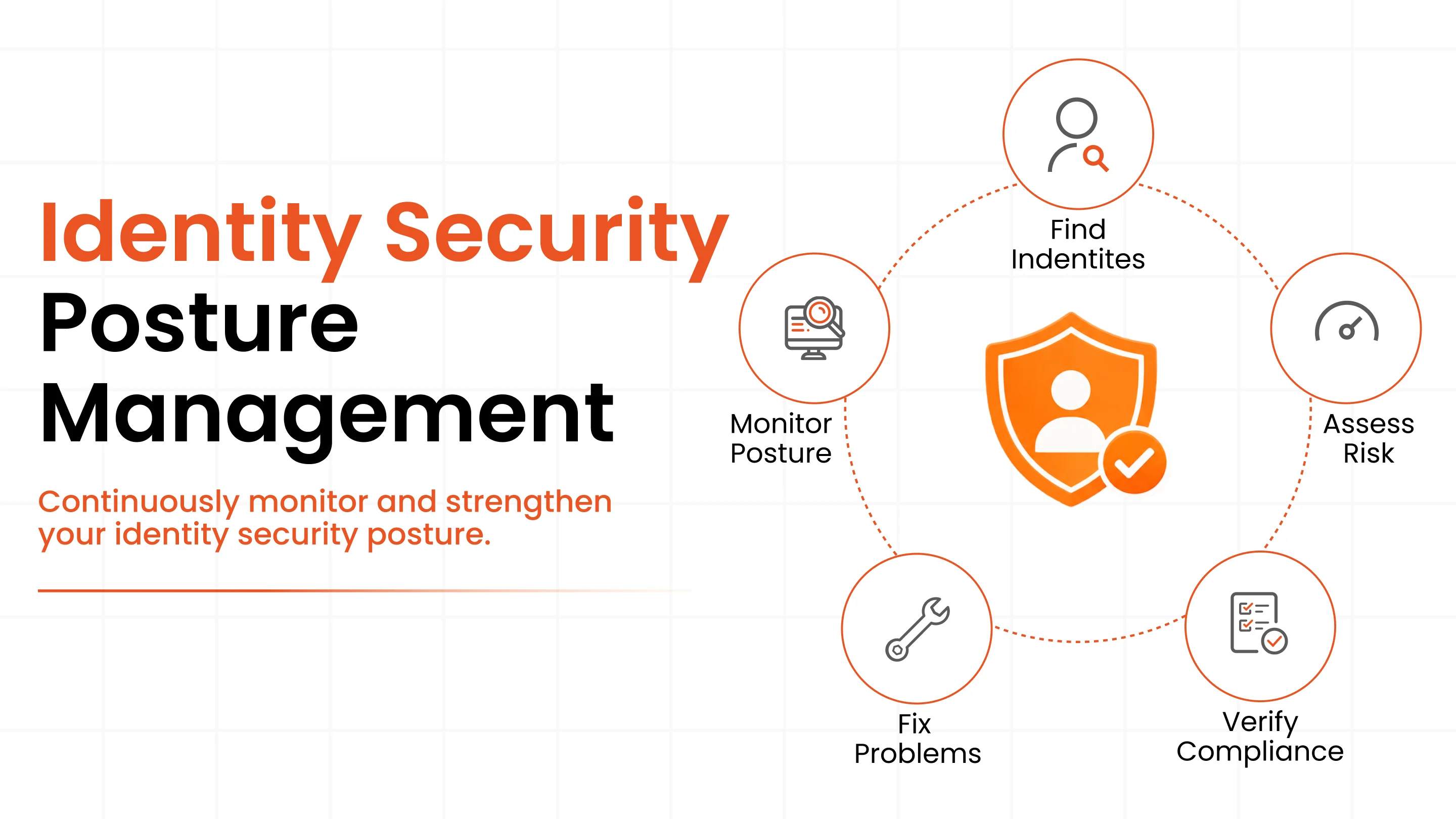

Securing Autonomous AI Agents with IAM

The organizations that benefit most from AI agents will not simply be the fastest adopters. They will be the ones that make agent behavior visible, accountable, and safe enough to scale. That starts with getting the fundamentals right: strong Identity and Access Management (IAM) so every agent operates under its own verified identity with only the access it actually needs. Clear audit trails so you always know what an agent did and why. And runtime controls that catch problems before they become incidents.

When those foundations are in place, AI agent security stops feeling like a blocker and starts becoming part of what makes automation trustworthy in the first place.

Frequently Asked Questions

What are the biggest security risks of AI agents in enterprise environments?

The biggest security risks include over-privileged access, weak credentials, prompt injection attacks, unsafe tool integrations, poor visibility, and sensitive data exposure.

How do AI agents differ from traditional software in terms of security?

Traditional software follows fixed rules and behaves predictably. AI agents read context, interpret goals, and make decisions across tools and data in real time, which makes their behavior harder to predict and their access, logging, and output controls far more important.

What is prompt injection, and why does it matter for AI agents?

Prompt injection happens when someone hides harmful instructions inside content an AI agent reads. The agent treats it as valid input and may expose data or perform unintended actions.

How should enterprises govern AI agent permissions in 2026?

Without proper access controls, AI agents can become major security risks. Enterprises should give agents only the permissions they need and regularly review their access.

What compliance frameworks apply to AI agent deployments?

Compliance requirements vary by industry and region, but most frameworks expect clear audit logs, secure data handling, defined approvals, and human oversight for AI agent activity.
