AI agents are changing the security model in a way many teams are still underestimating.

LLMs, copilots, autonomous workflows, and agentic tools do not behave like traditional software. They make decisions, chain actions, and call multiple systems on their own, often at machine speed.

Despite this shift, many implementations still rely on API keys as the primary method of authentication. According to a Cloud Security Alliance report, 44% of organizations still authenticate AI agents with static API keys.

This approach worked for predictable request-response applications, but for autonomous agents operating across workflows, reusing credentials, and making independent calls at scale, it starts to break down.

This is the core of the IAM vs API keys debate. For modern AI systems, identity-based access for AI agents is not an enhancement; it is a requirement. IAM provides the structure needed to manage AI-driven interactions securely, which makes it essential for any organization building or scaling intelligent, autonomous systems.

What Are API Keys and Why They Fall Short for AI Agents

API keys are a basic authentication mechanism built around a shared secret. A client includes the key in a request, and the system grants access if the key is valid. Their simplicity and low implementation overhead made them the default choice for early APIs.
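To make the shared-secret model concrete, here is a minimal sketch of server-side API key validation. The `X-API-Key` header name and the in-memory key set are illustrative assumptions, not any specific product's API. The check can only answer yes or no. Nothing in it identifies which agent, user, or task sent the request.

```python
import hmac

# Hypothetical key store: the server knows which secrets are valid,
# but not which agent, user, or task is presenting them.
VALID_API_KEYS = {"sk_live_example_123"}


def is_authorized(request_headers: dict) -> bool:
    """Grant access if the presented key matches any known key."""
    presented = request_headers.get("X-API-Key", "")
    # compare_digest avoids timing leaks, but the result is still a bare yes/no.
    return any(
        hmac.compare_digest(presented.encode(), key.encode())
        for key in VALID_API_KEYS
    )


# Two different agents sending the same key are indistinguishable here.
print(is_authorized({"X-API-Key": "sk_live_example_123"}))
print(is_authorized({"X-API-Key": "wrong"}))
```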

However, this model assumes predictable usage patterns. AI agents break that assumption. They operate across workflows, call multiple services, and act independently, which exposes the risks of using API keys in AI systems.

At a fundamental level, API keys are not identity-aware. They validate access but do not answer who or what is making the request. This creates a critical visibility gap, especially in systems where agents act on behalf of users or across multiple contexts.

Common API key-related security risks include:

- Static credentials offer no identity context, so it is hard to tell who or what is making the call.

- No fine-grained authorization, so access often becomes all-or-nothing.

- Manual rotation and revocation increase vulnerability as the number of agents grows.

- Weak traceability, which makes incident response and audit work harder.

- High exposure risk through logs, repositories, or client-side usage.

These issues intensify in AI environments. Agents often reuse the same keys across tasks, increasing the blast radius of a compromise. Prompt injection attacks can manipulate agents into exposing or misusing credentials, since the agent itself may have direct access to the key.

The result is a system with limited traceability and weak control boundaries. This highlights why API keys are not secure for AI agents. Static credentials were not designed for autonomous execution and do not provide the control required for modern AI-driven workflows.

What Is Identity-Based Access (IAM) in AI Systems

IAM was designed to answer a simple question: who gets access to what. That question becomes harder to answer when the “who” is no longer a person, but an autonomous system making decisions on its own.

This is already a scale problem. Non-human identities, including service accounts and applications, now outnumber human users in most enterprise environments, yet they are often less governed and more over-permissioned. AI agents fit directly into this category but operate with even more independence, and that combination is already reshaping how organizations think about identity.

What Experts Say:

“The organizations that lead in 2026 will be those that build identity into the DNA of AI, creating systems that are not only intelligent, but inherently trustworthy.”

— Ellen Boehm, SVP of IoT and AI Identity Innovation at Keyfactor

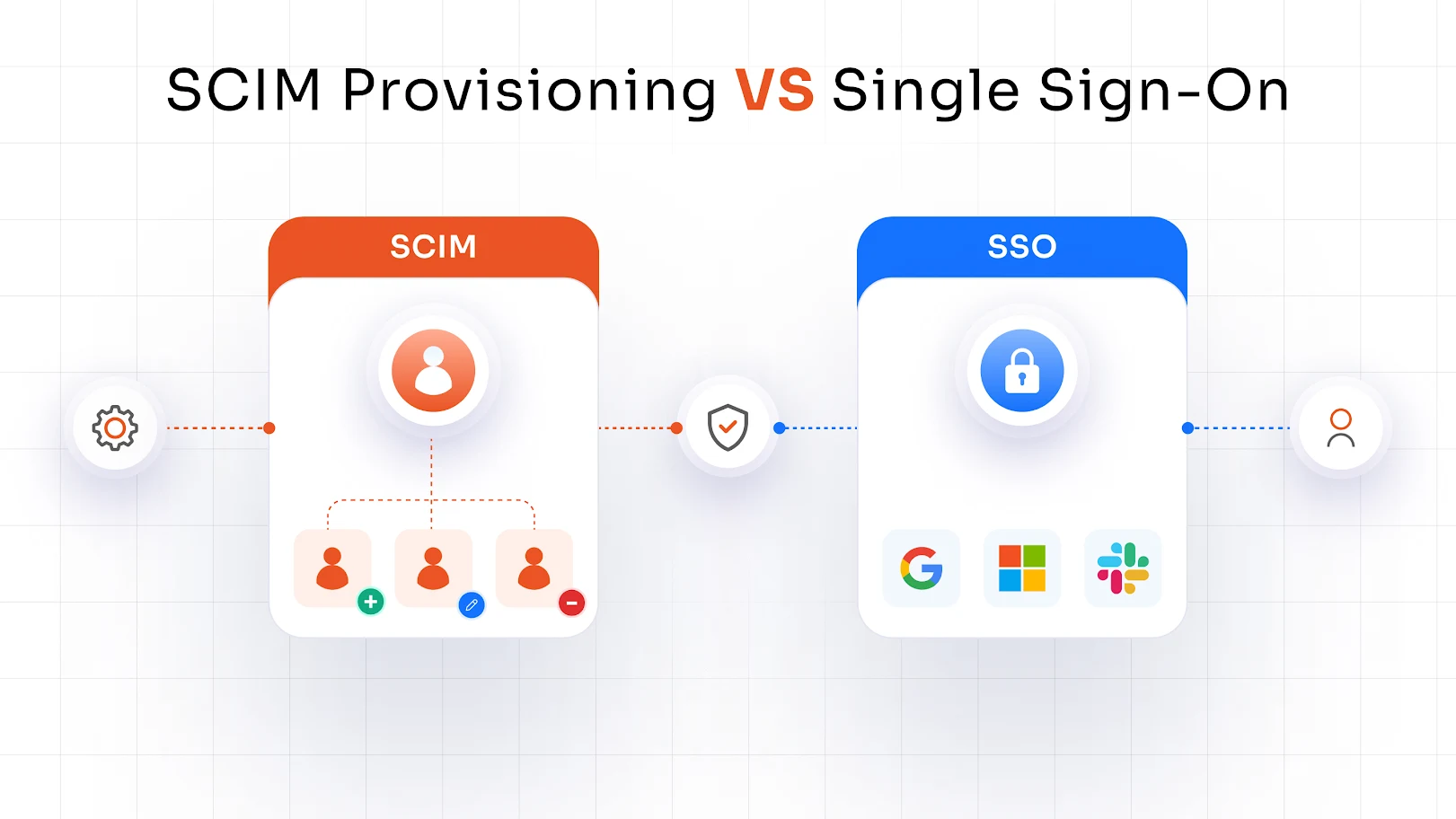

IAM for AI agents shifts the model from shared access to individual accountability. Each agent is assigned a distinct machine identity, allowing every action to be tied back to a specific entity rather than a reused credential.

The enforcement layer relies on identity-first mechanisms:

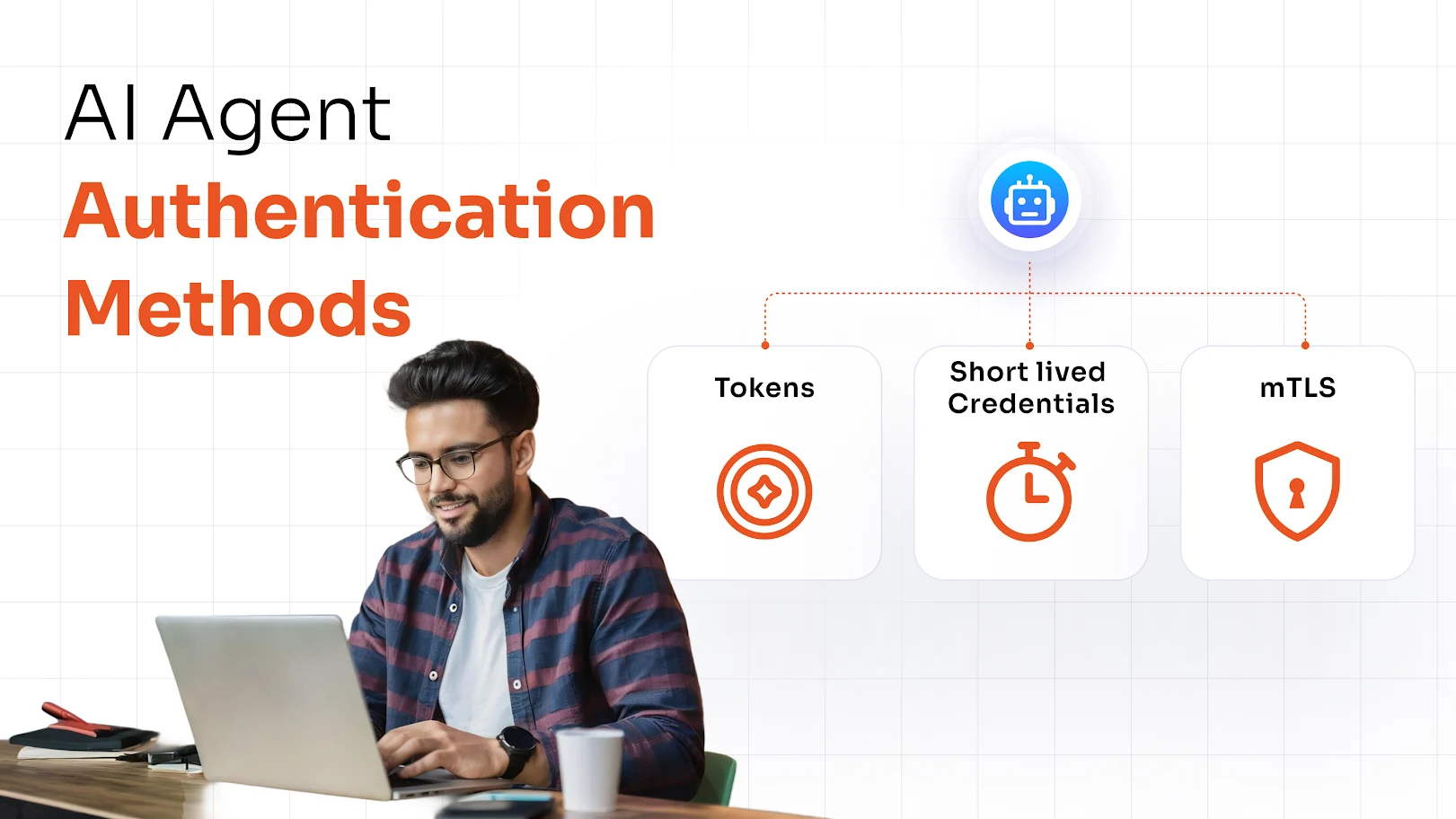

- OAuth lets agents request delegated, scoped access instead of holding and exposing long-lived credentials

- Token-based authentication replaces static keys with short-lived tokens

- Role-Based Access Control (RBAC) defines what an agent is allowed to do

- Attribute-Based Access Control (ABAC) adjusts access dynamically based on context
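The following sketch shows how RBAC and ABAC can combine in a single policy check. It is built on assumptions: the role names, the `tickets:*` and `invoices:*` permissions, and the rule that restricted data requires an active user session are all hypothetical. RBAC decides what a role may do at all. ABAC then narrows that decision using attributes of the specific request.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping (RBAC): what each role may do at all.
ROLE_PERMISSIONS = {
    "support-copilot": {"tickets:read", "tickets:comment"},
    "billing-agent": {"invoices:read", "invoices:create"},
}


@dataclass
class RequestContext:
    role: str
    action: str
    data_classification: str  # e.g. "public", "internal", "restricted"
    acting_for_user: bool     # is the agent acting for a signed-in user right now?


def is_allowed(ctx: RequestContext) -> bool:
    # RBAC: the agent's role must include the requested action.
    if ctx.action not in ROLE_PERMISSIONS.get(ctx.role, set()):
        return False
    # ABAC: request attributes can further narrow access (hypothetical rule).
    if ctx.data_classification == "restricted" and not ctx.acting_for_user:
        return False
    return True
```

Keeping the two layers separate makes it possible to change contextual rules without redefining roles.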

In practice, this means every AI agent operates with:

- A unique, traceable identity

- Scoped permissions tied to specific tasks

- Time-bound access that limits exposure
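The three properties above can be modeled as plain data, assuming an illustrative in-process representation rather than any IAM product's schema. `AgentIdentity`, `AccessGrant`, and `grant_for_task` are hypothetical names. Each agent gets its own ID and an accountable owner. Each grant carries only the scopes one task needs, and the grant expires on its own.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import FrozenSet, Optional, Set


@dataclass(frozen=True)
class AgentIdentity:
    """A distinct, traceable identity for one AI agent (illustrative model)."""
    name: str
    owner: str  # the person or team accountable for this agent
    agent_id: str = field(default_factory=lambda: f"agent-{uuid.uuid4()}")


@dataclass(frozen=True)
class AccessGrant:
    """Scoped, time-bound permissions issued to one agent for one task."""
    agent: AgentIdentity
    scopes: FrozenSet[str]
    expires_at: datetime

    def permits(self, scope: str, now: Optional[datetime] = None) -> bool:
        now = now or datetime.now(timezone.utc)
        # Both conditions must hold: the scope was granted and the grant is still valid.
        return scope in self.scopes and now < self.expires_at


def grant_for_task(agent: AgentIdentity, scopes: Set[str], ttl_minutes: int = 15) -> AccessGrant:
    expires_at = datetime.now(timezone.utc) + timedelta(minutes=ttl_minutes)
    return AccessGrant(agent=agent, scopes=frozenset(scopes), expires_at=expires_at)
```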

The difference is structural. API keys grant access. IAM defines identity, enforces boundaries, and continuously evaluates behavior. For AI systems operating across services and workflows, that distinction is what makes secure execution possible.

IAM vs API Keys — A Security Comparison

| Feature | API Keys | IAM (Identity-Based Access) | Context in AI Environments |
|---|---|---|---|
| Identity Awareness | None | Strong (user/agent identity) | API keys may work for simple, single-service integrations. In AI systems with multiple agents, lack of identity makes attribution and accountability difficult. |
| Access Control | Static | Granular & dynamic | Static access is manageable for fixed workflows. AI agents require permissions that adapt based on task, context, and data sensitivity. |
| Credential Type | Long-lived | Short-lived tokens | Long-lived keys reduce overhead in stable systems. In AI environments, they increase exposure due to continuous, autonomous execution. |
| Auditability | Limited | Full traceability | API keys provide minimal logging context. AI workflows require detailed tracking of which agent performed each action across systems. |
| Rotation | Manual | Automated | Manual rotation is feasible at small scales. With multiple AI agents and integrations, it becomes operationally inefficient and error-prone. |
| Zero Trust Ready | No | Yes | API keys assume implicit trust once issued. AI systems require continuous verification of identity and context for every request. |

This IAM vs API keys comparison highlights a fundamental shift.

API keys operate as static credentials that validate access at a surface level.

IAM introduces context, defining not just whether access is allowed, but who is requesting it, what they can do, when they can do it, and under what conditions.

In simple terms: API keys provide access. IAM defines who + what + when + why.

Why AI Agents Demand Identity-Based Security

AI agents used to respond. Now they initiate.

We’re moving into an era where these agents are no longer passive tools limited to assisting humans; they act on behalf of users, systems, and other agents. They can execute workflows, complete transactions, and make decisions with minimal intervention, all at machine speed.

That shift creates a mismatch. Traditional identity and access management frameworks were designed for predictable, user-driven interactions, not autonomous systems operating at scale. Static credentials cannot keep up with this level of independence, which is why AI agent security needs to be anchored in identity, not just access.

1. Autonomous decision-making requires accountability. AI agents do not just retrieve data; they trigger actions. Without a unique, verifiable identity, there is no reliable way to attribute those actions. Shared credentials like API keys collapse accountability, making it difficult to answer a basic question: which agent performed what action, and why?

2. Dynamic workflows need dynamic access. Agent-driven workflows are not static. Permissions may need to change based on task, context, or data sensitivity. Static credentials cannot adapt to these conditions. Identity-based access introduces scoped, time-bound permissions that align access with real-time requirements.

3. Increased attack surface. AI agents routinely interact with multiple APIs, external tools, and internal systems. Each integration expands the attack surface. If a single credential is reused across these touchpoints, it becomes a high-value target, and a compromise in one area can cascade across the entire workflow.

4. Zero Trust is no longer optional. The principle behind Zero Trust is straightforward: no entity is inherently trusted. Every request must be continuously verified based on identity, context, and policy. This is particularly relevant for AI agents, where implicit trust in long-lived credentials introduces systemic risk.

5. Compliance and governance requirements. Frameworks and regulations such as SOC 2 and GDPR expect traceability, least-privilege access, and enforceable controls. Identity-based systems provide audit logs, policy enforcement, and access boundaries that support these requirements, while static credentials make verifiable governance difficult to demonstrate.
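Accountability and auditability both depend on recording *who* acted, not just *that* something happened. This sketch records one agent action as a structured, identity-linked log line. The field names (`agent_id`, `on_behalf_of`, `decision`, `reason`) are illustrative assumptions, not a prescribed audit schema.

```python
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("agent.audit")


def audit_event(agent_id: str, on_behalf_of: str, action: str,
                resource: str, decision: str, reason: str) -> str:
    """Record one agent action as a structured, identity-linked log line."""
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,          # which agent acted
        "on_behalf_of": on_behalf_of,  # which user or system it acted for
        "action": action,
        "resource": resource,
        "decision": decision,          # "allow" or "deny"
        "reason": reason,              # the policy that produced the decision
    }
    line = json.dumps(event, sort_keys=True)
    logger.info(line)
    return line
```

With a shared API key, the `agent_id` and `on_behalf_of` fields would have nothing trustworthy to contain.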

Common Security Risks When Using API Keys for AI Agents

API keys were built for straightforward, server-to-server authentication. That model starts to break when applied to autonomous systems.

To put it simply, API key vulnerabilities in AI environments are structural, not incidental.

Key leakage in logs and on GitHub: API keys frequently end up in logs, client-side code, or public repositories such as GitHub. Once exposed, they can be reused without restriction, especially when they are not bound to a specific identity or context.

Over-permissioned access: Most API keys grant broad, all-or-nothing access. In AI workflows, where agents interact with multiple services, this creates unnecessary privilege expansion. A single compromised key can unlock multiple systems.

No session control: API keys do not support session management. There is no concept of session expiry, re-authentication, or contextual validation, which makes continuous control over agent activity impossible.

No user-to-agent mapping: When multiple agents reuse the same key, actions cannot be traced back to a specific source. This breaks accountability and makes auditing unreliable.

Difficulty in revocation: Rotating or revoking API keys is typically manual. In active AI systems, this can interrupt workflows or require widespread updates across integrations.

Where This Breaks in Real AI Systems

AI copilots integrated into internal systems (for example, assistants querying company databases or triggering backend workflows) often rely on a single API key to access multiple internal endpoints. If that key is exposed, it can be reused to query sensitive data or execute actions across systems without any user-level verification.

In another scenario, LLM-powered integrations that call third-party APIs—such as tools that fetch data, send emails, or trigger transactions—can be manipulated through prompt injection. An attacker can influence the model’s input to make unintended API calls using the same static key, effectively abusing trusted integrations without needing direct access to the credential itself.
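One way to contain this is to enforce authorization in ordinary code that runs after the model has produced its output, using the agent's own granted scopes. This is a minimal sketch. The `TOOL_SCOPES` mapping, `ToolCallDenied`, and `execute_tool_call` are hypothetical names. This check does not stop injection itself. It bounds the damage, because injected instructions can only trigger tools the agent was already granted.

```python
# Hypothetical mapping from tool names to the scope each one requires.
TOOL_SCOPES = {
    "search_docs": "docs:read",
    "send_email": "email:send",
    "create_payment": "payments:write",
}


class ToolCallDenied(Exception):
    pass


def execute_tool_call(tool: str, args: dict, granted_scopes: set) -> str:
    """Run a tool the model asked for, but only if this agent's token allows it.

    The check runs outside the model, so a manipulated prompt can only trigger
    tools that are already within the agent's granted scopes.
    """
    required = TOOL_SCOPES.get(tool)
    if required is None or required not in granted_scopes:
        raise ToolCallDenied(f"{tool!r} requires scope {required!r}")
    return f"executed {tool} with {sorted(args)}"  # placeholder for the real call
```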

How IAM Secures AI Agents

To secure AI agents with IAM, organizations need to move away from static credentials and toward identity-driven access control. Instead of treating agents as anonymous callers, IAM assigns identity, enforces policy, and validates every interaction.

Core Capabilities

- Unique Agent Identities (Machine Identity): Each agent is assigned a distinct identity, enabling traceability and controlled access, so every action can be attributed to a specific agent.

- OAuth 2.0 / OpenID Connect-based Authentication (OAuth AI security): OAuth 2.0 issues scoped access tokens for delegated authorization, and OpenID Connect adds a standard identity layer on top of it. Together they replace static API keys with identity-linked tokens that reflect what an agent is allowed to do, not just whether it can connect.

- Short-lived Access Tokens: Access is granted through time-bound tokens limited to specific actions. This reduces exposure and limits how long a stolen token remains useful.

- Fine-grained Access Control (RBAC / ABAC): Permissions are defined using roles and attributes, allowing access to adapt to task, context, or data sensitivity.

- Continuous Verification (Zero Trust): Every request is validated in real time against identity and context, so access is never implicitly trusted.

- Audit Logs and Monitoring: All actions are recorded with identity context, enabling full traceability and compliance enforcement.
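For machine-to-machine access, a common OAuth 2.0 pattern is the client credentials grant (RFC 6749, section 4.4). In this grant, the agent asks the authorization server for a token limited to specific scopes. The sketch below builds, but does not send, such a request using only the standard library. The token URL, client ID, secret, and scope names are placeholders.

```python
import base64
import urllib.parse
import urllib.request


def build_token_request(token_url: str, client_id: str, client_secret: str,
                        scopes: list) -> urllib.request.Request:
    """Build (but do not send) an OAuth 2.0 client credentials token request."""
    body = urllib.parse.urlencode({
        "grant_type": "client_credentials",
        "scope": " ".join(scopes),  # OAuth scopes are space-delimited
    }).encode("ascii")
    # RFC 6749 section 2.3.1: form-encode the client ID and secret, then use HTTP Basic.
    raw = f"{urllib.parse.quote_plus(client_id)}:{urllib.parse.quote_plus(client_secret)}"
    credentials = base64.b64encode(raw.encode()).decode("ascii")
    return urllib.request.Request(
        token_url,
        data=body,
        headers={
            "Authorization": f"Basic {credentials}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="POST",
    )


req = build_token_request("https://idp.example.com/oauth2/token",
                          "report-agent", "s3cret", ["reports:read"])
# urllib.request.urlopen(req) would POST it; a successful JSON response carries
# access_token, token_type and expires_in (RFC 6749, section 5.1).
```

The client secret in this sketch is still a long-lived credential, but it is only ever sent to the authorization server, never to the APIs the agent calls. Where it is supported, stronger client authentication such as `private_key_jwt` or mutual TLS can remove the shared secret entirely.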

How the Access Flow Works

1. Agent requests access to a resource or API

2. IAM verifies identity using OAuth/OpenID-based authentication

3. A scoped, short-lived token is issued based on defined policies

4. The target API validates the token and grants access only within those policy constraints
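The four steps above can be traced end to end in a small, self-contained sketch. It assumes a hypothetical in-process issuer with an HMAC signing key and a hard-coded policy table. It also assumes the agent has already authenticated (step 2). The token format is hand-rolled purely to show the flow. Real deployments would use a standard format such as JWT (RFC 7519) and a maintained validation library.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Set

SIGNING_KEY = b"demo-only-signing-key"  # hypothetical; a real IdP manages its own keys

# Hypothetical policy: which scopes each agent identity may be issued.
POLICY = {"agent-report-writer": {"reports:read"}}


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).decode("ascii").rstrip("=")


def _sign(payload: str) -> str:
    return _b64(hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).digest())


def issue_token(agent_id: str, requested: Set[str], ttl_seconds: int = 300) -> str:
    """Step 3: issue a short-lived token containing only the scopes policy allows."""
    allowed = set(requested) & POLICY.get(agent_id, set())
    if not allowed:
        raise PermissionError("no requested scope is permitted for this agent")
    claims = {"sub": agent_id, "scope": sorted(allowed), "exp": int(time.time()) + ttl_seconds}
    payload = _b64(json.dumps(claims, sort_keys=True).encode())
    return f"{payload}.{_sign(payload)}"


def check_access(token: str, required_scope: str) -> str:
    """Step 4: the API verifies signature, expiry, and scope before serving the call."""
    payload, _, sig = token.partition(".")
    if not hmac.compare_digest(sig.encode(), _sign(payload).encode()):
        raise PermissionError("bad signature")
    claims = json.loads(base64.urlsafe_b64decode(payload + "=" * (-len(payload) % 4)))
    if claims["exp"] <= time.time():
        raise PermissionError("token expired")
    if required_scope not in claims["scope"]:
        raise PermissionError(f"missing scope {required_scope}")
    return claims["sub"]  # the verified agent identity, usable for audit logging
```

In this example, an agent that requests `{"reports:read", "reports:delete"}` receives a token carrying only `reports:read`. Any call that needs `reports:delete` is rejected at the API, even though the agent asked for it.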

Implementing IAM for AI Agents with miniOrange

When AI agents start executing workflows instead of just responding to prompts, access control becomes a system-level concern.

Static credentials don’t provide a reliable way to assign identity, enforce permissions, or track what each agent is doing across systems. IAM addresses this by introducing identity, policy, and traceability at the agent level.

With an IAM solution such as miniOrange IAM for AI security, organizations can apply this model directly to AI environments, managing how agents authenticate, what they can access, and how those actions are governed across APIs and enterprise systems.

Key Features

- Centralized Identity Management Manage users, applications, and AI agents within a single identity layer, ensuring consistent policy enforcement and eliminating siloed access control.

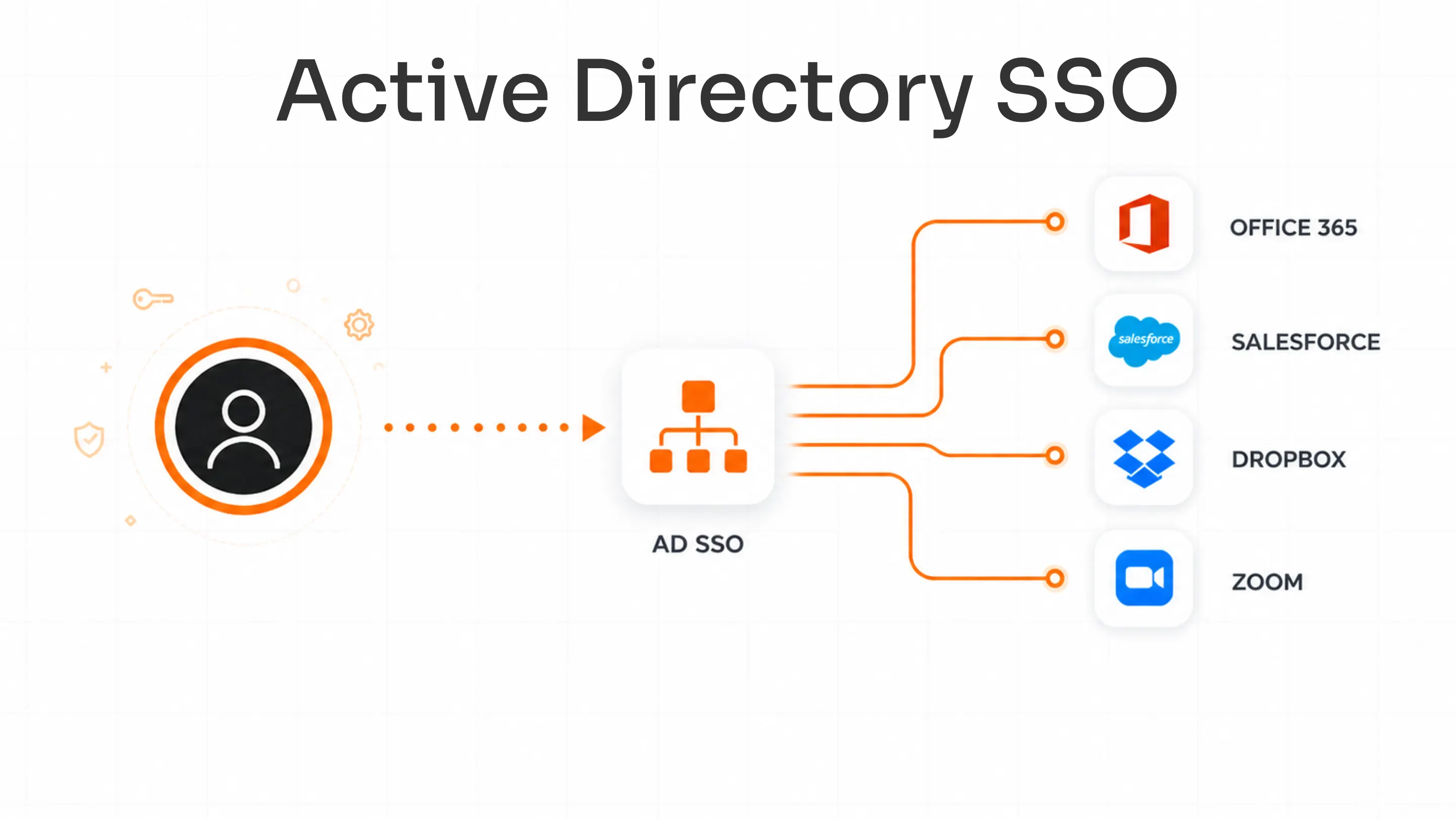

- OAuth / SSO Integration Integrate with existing identity providers and enable token-based authentication using standards like OAuth 2.0 and OpenID Connect, replacing static API keys with identity-aware access.

- API Access Control Enforce access policies at the API level based on agent identity, role, and context, preventing over-permissioned access and reducing exposure across services.

- Machine Identity Management Treat AI agents, bots, and services as first-class identities with defined ownership, lifecycle management, and permission boundaries.

- Adaptive Authentication Apply context-aware checks (device, behavior, request patterns) before granting access, strengthening control in dynamic AI-driven environments.

- Compliance-Ready Logging Capture detailed, identity-linked logs for every action, enabling auditability, traceability, and alignment with regulatory requirements.

Where This Applies:

Securing LLM-Based Applications: When LLMs are connected to internal tools (databases, CRMs, APIs), miniOrange IAM ensures every request is authenticated and scoped. Instead of exposing backend systems through API keys, access is granted through identity-backed tokens, limiting what the model can retrieve or execute.

AI Copilots Accessing Enterprise Systems: Copilots interacting with systems such as ERP, HRMS, or support platforms operate under defined identities. Identity-based access for AI agents enforces role- and attribute-based policies so the copilot can perform only approved actions, such as read-only access to specific datasets or restricted workflow execution.

Automated Workflows Across APIs: In multi-step workflows where agents trigger actions across different services, a dedicated IAM solution can issue a short-lived token for each interaction. Each step is validated independently, so access does not persist beyond its intended scope and a token cannot be reused across systems.
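The guarantee that a per-step token "cannot be reused across systems" usually comes from audience binding. Each token names the service it was minted for, and every service rejects tokens addressed elsewhere. This sketch assumes the token's signature has already been verified and its payload decoded into a dict. It uses the standard JWT registered claims `aud` and `exp` (RFC 7519). The service names are placeholders.

```python
import time
from typing import Optional


def validate_step_token(claims: dict, this_service: str, now: Optional[float] = None) -> None:
    """Reject a token unless it was minted for this service and is still fresh.

    `claims` is assumed to be the payload of a token whose signature has already
    been verified. Per RFC 7519, `aud` may be a single string or a list of strings.
    """
    now = time.time() if now is None else now
    aud = claims.get("aud")
    audiences = aud if isinstance(aud, list) else [aud]
    if this_service not in audiences:
        raise PermissionError(f"token not intended for {this_service}")
    if claims.get("exp", 0) <= now:
        raise PermissionError("token expired")
```

A token issued for `crm-api` in one workflow step is therefore useless if replayed against `billing-api` in the next step.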

Best Practices for Securing AI Agents

Securing autonomous systems requires tightening how identity, access, and trust are handled across every interaction.

These AI security best practices focus on reducing exposure while maintaining control over agent behavior.

- Replace API keys with OAuth tokens to move from static credentials to scoped, time-bound access control.

- Use least privilege access so each AI agent only gets the minimum permissions required for its specific task.

- Implement token expiration and rotation to limit exposure and reduce the impact of compromised credentials.

- Monitor agent activity continuously to detect unusual behavior, unauthorized access patterns, or policy violations in real time.

- Apply Zero Trust principles where every request is verified based on identity and context instead of implicit trust.

- Assign a separate identity to each agent to ensure clear traceability, accountability, and enforceable access boundaries.
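Short token lifetimes only help if agents renew tokens smoothly instead of reverting to long-lived credentials. This is a minimal sketch of client-side token reuse with early refresh. `TokenCache` is a hypothetical helper, and `fetch` is an assumed callable that returns `(access_token, expires_in_seconds)`, for example a wrapper around an OAuth 2.0 token request. The clock is injectable so the behavior can be tested.

```python
import time
from typing import Callable, Optional, Tuple


class TokenCache:
    """Reuse a short-lived token until shortly before it expires, then fetch a new one."""

    def __init__(self, fetch: Callable[[], Tuple[str, int]], skew_seconds: int = 30,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch
        self._skew = skew_seconds
        self._clock = clock
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        # Refresh a little early so a token never expires in the middle of a request.
        if self._token is None or self._clock() >= self._expires_at - self._skew:
            token, expires_in = self._fetch()
            self._token = token
            self._expires_at = self._clock() + expires_in
        return self._token
```

Because each token expires within minutes, a leaked token ages out on its own. This is one reason short-lived tokens reduce the need for the manual revocation that API keys depend on.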

The Future: Identity-First Security for AI Ecosystems

In most enterprise environments, machine identities already outnumber human users, and the gap continues to widen as autonomous agents take on more responsibility. These agents are increasingly treated as independent actors rather than extensions of applications.

Industry direction is clear: AI agents are becoming first-class identities, operating across systems with defined permissions and governance boundaries instead of static credentials.

As this shift accelerates, API keys are steadily losing relevance in sensitive environments, replaced by identity-driven models that can enforce accountability at scale.

In this landscape, IAM is emerging as the foundation of AI governance, ensuring every agent action is tied to identity, policy, and traceability rather than implicit trust.

Conclusion

Static credentials assume controlled environments. AI agents do not operate in controlled environments.

That mismatch is what creates risk in modern AI systems, especially when API keys are reused, shared across services, or loosely governed.

API keys are outdated for AI security and cannot reliably support autonomous, cross-system execution.

IAM enables secure, scalable AI adoption by introducing identity-based control that can be enforced consistently across agents, APIs, and enterprise systems.

Secure your AI agents with identity-based access. Explore miniOrange Identity & Access Management (IAM) for modern AI security.
